My blog is migrated to a new place: http://www.gridgain.com/resources/blog

Please update your bookmarks.

I would like to clarify definitions for the following technologies: in-memory distributed caches, in-memory data grids and in-memory databases.

These three terms are, surprisingly, often used interchangeably and yet technically and historically they represent very different products and serve different, sometimes very different, use cases.

It’s also important to note that there are no specifications or industry standards on what a cache, a data grid or a database should be (unlike Java application servers and JEE, for example). There was and still is an attempt to standardize caching via JSR107, but it has been years (almost a decade) in the making and it is hopelessly outdated by now (I’m on the expert group).

First of all, let me clarify that I am discussing caches, data grids and databases in the context of in-memory, distributed architectures. Traditional disk-based databases and non-distributed in-memory caches or databases are out of scope for this article.

Chronologically, caches, data grids and databases were developed in that order: starting from simple caching to more complex data grids and finally to distributed in-memory databases. The first distributed caches appeared in the late 1990s, data grids emerged around 2002-2003 and in-memory databases have really come to the forefront in the last 5 years.

All of these technologies have enjoyed a significant boost in interest in the last couple of years thanks to the explosive growth of in-memory computing in general, fueled by a 30% YoY price reduction for DRAM and cheaper Flash storage.

Despite the fact that I believe that distributed caching is rapidly going away, I still think it’s important to place it in its proper historical and technical context along with data grids and databases.

The primary use case for caching is to keep frequently accessed data in process memory to avoid constantly fetching this data from disk, which leads to the High Availability (HA) of that data to the application running in that process space (hence, “in-memory” caching).

Most of the caches were built as distributed in-memory key/value stores that supported a simple set of ‘put’ and ‘get’ operations and optionally some sort of read-through and write-through behavior for writing and reading values to and from underlying disk-based storage such as an RDBMS. Depending on the product, additional features like ACID transactions, eviction policies, replication vs. partitioning, active backups, etc. also became available as the products matured.
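
To make the ‘put’/‘get’ plus read-through and write-through behavior concrete, here is a minimal single-process sketch. The class name, the `store_reads` counter and the plain dictionary standing in for the RDBMS are all illustrative assumptions, not the API of any particular caching product.

```python
class ReadWriteThroughCache:
    """Key/value cache sitting in front of a slower backing store."""

    def __init__(self, store):
        self.store = store      # stand-in for disk-based storage such as an RDBMS
        self.memory = {}        # in-memory copies of entries
        self.store_reads = 0    # how many times a get had to go to the store

    def get(self, key):
        # Read-through: on a miss, load from the store and keep a copy.
        if key not in self.memory:
            self.store_reads += 1
            self.memory[key] = self.store[key]
        return self.memory[key]

    def put(self, key, value):
        # Write-through: update the store and the in-memory copy together.
        self.store[key] = value
        self.memory[key] = value


backing_store = {"user:1": "Alice"}
cache = ReadWriteThroughCache(backing_store)
first = cache.get("user:1")     # miss, goes to the store
second = cache.get("user:1")    # hit, served from memory
cache.put("user:2", "Bob")      # lands in both memory and the store
```

A distributed cache adds partitioning or replication of `memory` across processes on top of this, but the contract seen by the application stays the same.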

These fundamental data management capabilities of distributed caches formed the foundation for the technologies that came later and were built on top of them such as In-Memory Data Grids.

The feature that distinguishes data grids from distributed caches is their ability to support co-location of computations with data in a distributed context, and consequently the ability to move computation to data. This capability was the key innovation that addressed the demands of rapidly growing data sets, which made moving data to the application layer increasingly impractical. Most of the data grids provided some basic capabilities to move the computations to the data.
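
The idea of moving computation to data can be sketched in a few lines. In this toy model the “nodes” are just objects in one process, keys are assigned to nodes by a CRC32 hash, and `Grid`, `Node` and `broadcast` are hypothetical names chosen for the illustration rather than any product's API.

```python
import zlib


class Node:
    def __init__(self, name):
        self.name = name
        self.data = {}          # the partition of the data set owned by this node

    def execute(self, func):
        # Run the function next to this node's data and return only the result.
        return func(self.data)


class Grid:
    def __init__(self, node_names):
        self.nodes = [Node(name) for name in node_names]

    def owner(self, key):
        # Deterministic key-to-node mapping (simple hash partitioning).
        return self.nodes[zlib.crc32(key.encode()) % len(self.nodes)]

    def put(self, key, value):
        self.owner(key).data[key] = value

    def broadcast(self, func):
        # Ship the computation to every node instead of pulling the data out.
        return [node.execute(func) for node in self.nodes]


grid = Grid(["node-a", "node-b", "node-c"])
for i in range(1000):
    grid.put(f"order:{i}", i)

partial_sums = grid.broadcast(lambda data: sum(data.values()))
total = sum(partial_sums)
```

Only three small numbers travel back to the caller, however large each partition is. That is the whole point of co-locating computations with data.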

This new and very disruptive capability also marked the start of the evolution of in-memory computing away from simple caching to a bona-fide modern system of record. This evolution culminated in today’s In-Memory Databases.

The feature that distinguishes in-memory databases from data grids is the addition of distributed MPP processing based on standard SQL and/or MapReduce, which allows computing over data stored in-memory across the cluster.

Just as data grids were developed in response to rapidly growing data sets and the necessity to move computations to data, in-memory databases were developed to respond to the growing complexities of data processing. It was no longer enough to have simple key-based access or RPC type processing. Distributed SQL, complex indexing and MapReduce-based processing across TBs of data in-memory are necessary tools for today’s demanding data processing.

Adding distributed SQL and/or MapReduce type processing required a complete re-thinking of data grids, as focus has shifted from pure data management to hybrid data and compute management.

GridGain develops an In-Memory Database product as part of its end-to-end in-memory computing suite.

What is common about Oracle and SAP when it comes to In-Memory Computing? Both see this technology as merely a high performance addition to SQL-based database products. This is shortsighted and misses a significant point.

It is interesting to note that as the NoSQL movement sails through the “trough of disillusionment,” traditional SQL and transactional datastores are re-gaining some of the attention. But, importantly, the return to SQL, even based on in-memory technology, is limiting for many newer payload types. In-Memory Computing will play a role which is much more significant than that of a mere SQL database accelerator.

Let’s take high performance computations as an example. Use cases abound: anything from traditional Monte Carlo simulations, video and audio processing, to NLP and image processing software. All can benefit greatly from in-memory processing and gain critical performance improvements – yet for systems like this a SQL database is of little, if any, help at all. In fact, SQL has absolutely nothing to do with these use cases – they require traditional HPC processing along the lines of MPI, MapReduce or MPP – and none of these are features of either Oracle or SAP HANA databases.

Or take streaming and CEP as another example. Tremendous growth in sensory, machine-to-machine and social data, generated in real time, makes streaming and CEP one of the fastest growing use cases for big data processing. The ability to ingest hundreds of thousands of events per second and process them in real time has practically nothing to do with traditional SQL databases – but everything to do with in-memory computing. In fact – these systems require a completely different approach of sliding window processing, streaming indexing and complex distributed workflow management – none of which are capabilities of either Oracle or SAP HANA.
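
For readers who have not met sliding window processing before, here is a minimal sketch of a time-based window that counts the events seen in the last N seconds. It assumes events arrive in timestamp order and runs in one process; `SlidingWindowCounter` is an illustrative name, and a real streaming engine adds distribution, indexing and out-of-order handling on top.

```python
from collections import deque


class SlidingWindowCounter:
    """Counts the events that fall inside the last `window_seconds`."""

    def __init__(self, window_seconds):
        self.window_seconds = window_seconds
        self.timestamps = deque()   # oldest event on the left

    def add(self, timestamp):
        self.timestamps.append(timestamp)
        self._expire(timestamp)

    def _expire(self, now):
        # Drop events that have slid out of the window.
        while self.timestamps and self.timestamps[0] <= now - self.window_seconds:
            self.timestamps.popleft()

    def count(self, now):
        self._expire(now)
        return len(self.timestamps)


window = SlidingWindowCounter(window_seconds=10)
for t in [1, 2, 3, 11, 12]:
    window.add(t)
in_window = window.count(now=12)    # events at 3, 11 and 12 are still inside
```

Nothing here resembles a SQL query over a stored table: the data is processed as it arrives and discarded as it ages out.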

Nonetheless, SQL processing was, is, and always will be with us. Ironically, it is now getting back on some of the pundits’ radars. For example, in data warehousing, where Hadoop can be used as a massive data store of record, SQL can play well. In-Memory Computing, however, plays a greater role than just a cache for a large datastore. New payload types require different processing approaches – and all benefit from the dramatic performance improvements brought by in-memory computing.

At GridGain, we are keenly aware of the self-evident point: In-Memory Computing is much more significant than just getting a slow SQL database to go faster. Our end-to-end product suite delivers many additional benefits of in-memory computing and handles use cases that are impossible to address in the traditional database world. And there’s so much more to come.

Almost two years ago, Dmitriy and I stood in front of a white board at GridGain’s office thinking: “How can we deliver the real-time performance of GridGain’s in-memory technology to Hadoop customers without asking them to rip and replace their systems and without asking them to move their datasets off Hadoop?”

Given Hadoop’s architecture – the task seemed daunting; and it proved to be one of the more challenging engineering puzzles we have had to solve.

After two years of development, tens of thousands of lines of Java, Scala and C++ code, multiple design iterations, several releases and dozens of benchmarks, we finally built a product that can deliver real-time performance to Hadoop customers with seamless integration and no tedious ETL. Actual customer deployments can now prove our performance claims and validate our product’s architecture.

Here’s how we did it.

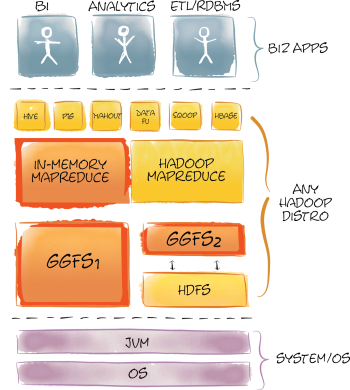

Hadoop is based on two primary technologies: HDFS for storing data, and MapReduce for processing these data in parallel. Everything else in Hadoop and the Hadoop ecosystem sits atop these foundation blocks.

Originally, neither HDFS nor MapReduce was designed with real-time performance in mind. In order to deliver real-time processing without moving data out of Hadoop onto another platform, we had to improve the performance of both of these subsystems.

We decided to develop a high-performance in-memory file system which would provide 100% compatibility with HDFS and an optimized MapReduce implementation which would take advantage of this real-time file system. By doing so, we could offer all the advantages of our in-memory platform while minimizing the disruption of our customers’ existing Hadoop investments.

There are many projects and products that aim to improve Hadoop performance. HDFS2, Apache Tez, Cloudera Impala, Hortonworks Stinger, ScaleOut hServer and Apache Spark, to name but a few, all aim to solve Hadoop performance issues in various ways. GridGain puts a new spin on some of these approaches, delivering unmatched performance gains while fanatically maintaining our commitment to making customers change less code and quickly get the benefits an in-memory computing platform can bring to their big data installations.

From a technology standpoint, GridGain’s In-Memory Hadoop Accelerator has some similarity to the architecture of Spark (optimized MapReduce), ScaleOut and HDFS2 (in-memory caching without ETL) and some features of Apache Tez (in-process execution). However, GridGain’s In-Memory Accelerator is the only product for Hadoop available today that combines both the high-performance HDFS-compatible file system and optimized in-memory MapReduce, along with many other features, in one fully integrated product.

First, we implemented GridGain’s In-Memory File System (GGFS) to accelerate I/O in the Hadoop stack. The original idea was that GGFS alone would be enough to gain a significant performance increase. However, while we saw significant performance gains using GGFS, when working with our customers we quickly found that there were some not-so-obvious performance limitations in the way Hadoop performs MapReduce. It quickly became clear to us that GGFS alone wouldn’t be enough, but it was a critical piece that we needed to build first.

Note that you shouldn’t confuse GGFS with much slower alternatives like RAM disk. GGFS is based on our Memory-First architecture and addresses more than just the seek time of the “device”.

From the get-go we designed GGFS to support both Hadoop v1 and Hadoop v2 (YARN). Further, we designed GGFS to work in two modes: a primary (standalone) mode and a secondary mode in which it acts as a cache over HDFS.

In primary standalone mode GGFS acts as a bona-fide Hadoop file system that is PnP compatible with the standard HDFS interface. Our customers use it to deploy a high-performance in-memory Hadoop cluster and use it as any other Hadoop file system – albeit one that trades capacity for maximum performance.

One of the great added benefits of the primary mode is that it does away with the NameNode in the Hadoop deployment. Unlike a standard Hadoop deployment that requires shared storage for primary and secondary NameNodes, which is usually implemented with a complex NFS setup mounted on each NameNode machine, GGFS seamlessly utilizes GridGain’s In-Memory Database under the hood to provide completely automatic scaling and failover without any need for additional shared storage or risky Single Point Of Failure (SPOF) architectures.

Furthermore, unlike Hadoop’s master-slave design for NameNodes that prevents Hadoop systems from scaling linearly when adding new nodes, GGFS is built on a highly scalable, natively distributed, partitioned data store which provides linear scalability and auto-discovery of new nodes joining the cluster. Removing the NameNode from the architecture enabled dramatically better performance of IO operations.

GGFS’s primary mode provides maximum performance for IO operations but requires moving data from disk-based HDFS to a memory-based GGFS (i.e. from one file system to another). While data movement may be appropriate for some use cases, we support another operating mode in which absolutely no ETL is required – no need to move data out of HDFS.

In the second mode, GGFS works as an intelligent secondary in-memory distributed cache over the primary disk-based HDFS file system. In this mode GGFS supports both synchronous and asynchronous read-through and write-through to and from HDFS providing either strong consistency or better performance in exchange for relaxed consistency with absolute transparency to the user and applications running on top of it. In this mode users can manually select which set of files and/or directories should be stored in GGFS and what mode – synchronous or asynchronous – should be used for each one of them for read-through and write-through to and from HDFS.
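
Per-directory mode selection can be pictured as a longest-prefix lookup. The sketch below is purely illustrative: the `path_modes` mapping, the mode names and `resolve_mode` are assumptions made for this example and do not reflect the actual GGFS configuration format.

```python
SYNC = "sync"      # the write reaches HDFS before the call returns
ASYNC = "async"    # the write is acknowledged first and flushed to HDFS later

# Hypothetical per-directory settings chosen by the user.
path_modes = {
    "/data/orders": SYNC,
    "/data/logs": ASYNC,
    "/data/logs/audit": SYNC,
}


def resolve_mode(path, modes):
    """Return the mode of the longest configured directory prefix,
    or None when the path is not kept in the in-memory layer at all."""
    best = None
    for prefix, mode in modes.items():
        if path == prefix or path.startswith(prefix + "/"):
            if best is None or len(prefix) > len(best[0]):
                best = (prefix, mode)
    return best[1] if best else None


orders_mode = resolve_mode("/data/orders/2013/part-0", path_modes)
logs_mode = resolve_mode("/data/logs/app.log", path_modes)
audit_mode = resolve_mode("/data/logs/audit/a.log", path_modes)
other_mode = resolve_mode("/tmp/scratch", path_modes)
```

The more specific `/data/logs/audit` entry wins over `/data/logs`, so strong consistency can be switched on for a sensitive subtree while the rest of the directory keeps the faster asynchronous behavior.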

Another interesting feature of GGFS is its smart use of block-level or file-level caching and eviction. When working in primary mode, GGFS utilizes file-level caching to ensure corruption-free storage (the file is either fully in GGFS or not at all). When in secondary mode, GridGain will automatically switch to block-level caching and eviction. What we discovered when working with our customers on real-world Hadoop payloads is that files on HDFS are often not accessed uniformly, i.e. there is significant “locality” in how portions of a file are accessed. Put another way, certain blocks of a file are accessed more frequently than others. That observation led to our block-level caching implementation for the secondary mode, which enables dramatically better memory utilization since GGFS can store only the most frequently used file blocks in memory – and not entire files, which can easily measure in the 100s of GBs in Hadoop.

Caching can NOT work effectively without a sophisticated eviction management mechanism to make sure that memory is used optimally. So, we built a new and technically robust eviction mechanism into our platform. Apart from obvious eviction features, you can configure certain files to never be evicted preserving them in memory in all cases for maximum performance, for example.
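
As an illustration of block-level caching with eviction and never-evict files, here is a small sketch built on a plain least-recently-used (LRU) policy. LRU is a stand-in chosen for simplicity, not a description of GridGain's actual eviction algorithm, and `BlockCache` and its parameters are hypothetical names.

```python
from collections import OrderedDict


class BlockCache:
    """LRU cache of file blocks; blocks of pinned files are never evicted."""

    def __init__(self, capacity_blocks, pinned_files=()):
        self.capacity = capacity_blocks
        self.pinned = set(pinned_files)
        self.blocks = OrderedDict()   # (file, block_index) -> bytes, oldest first

    def get(self, file, index):
        key = (file, index)
        if key not in self.blocks:
            return None
        self.blocks.move_to_end(key)  # mark as most recently used
        return self.blocks[key]

    def put(self, file, index, data):
        key = (file, index)
        self.blocks[key] = data
        self.blocks.move_to_end(key)
        self._evict()

    def _evict(self):
        # Drop the least recently used unpinned blocks until the cache fits.
        # If only pinned blocks remain, the cache is allowed to stay over capacity.
        for key in list(self.blocks):
            if len(self.blocks) <= self.capacity:
                break
            if key[0] not in self.pinned:
                del self.blocks[key]


cache = BlockCache(capacity_blocks=2, pinned_files={"/hot/lookup.dat"})
cache.put("/hot/lookup.dat", 0, b"pinned")
cache.put("/big/file.dat", 0, b"block-0")
cache.put("/big/file.dat", 7, b"block-7")   # over capacity: block 0 of the big file goes
```

Because the cache key is a (file, block) pair rather than a whole file, only the hot blocks of a large file need to occupy memory.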

To ensure seamless and continuous performance during MapReduce file scanning, we’ve implemented smart data prefetching: data that is expected to be read in the near future is streamed to the MapReduce task ahead of time. By doing so, GGFS ensures that whenever a MapReduce task finishes reading a file block, the next file block is already available in memory. A significant performance boost was achieved here due to our proprietary Inter-Process Communication (IPC) implementation which allows GGFS to achieve throughput of up to 30Gbit/s between two processes.
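
The prefetching idea for sequential scans can be sketched as simple read-ahead. This toy version is synchronous and single-process, so it shows the bookkeeping rather than the speed-up; a real implementation would load the next block in the background. `PrefetchingReader` and `fetch_block` are illustrative names, not part of GGFS.

```python
class PrefetchingReader:
    """Serves block reads and loads the following block ahead of time."""

    def __init__(self, fetch_block, total_blocks):
        self.fetch_block = fetch_block    # slow call, e.g. a read from disk
        self.total_blocks = total_blocks
        self.ready = {}                   # blocks already loaded into memory
        self.hits = 0                     # reads served from prefetched data

    def read(self, index):
        if index in self.ready:
            self.hits += 1
            data = self.ready.pop(index)
        else:
            data = self.fetch_block(index)
        # Load the next block now so a sequential scan finds it in memory.
        nxt = index + 1
        if nxt < self.total_blocks and nxt not in self.ready:
            self.ready[nxt] = self.fetch_block(nxt)
        return data


fetched = []

def fetch_block(index):
    fetched.append(index)
    return f"block-{index}".encode()


reader = PrefetchingReader(fetch_block, total_blocks=4)
scan = [reader.read(i) for i in range(4)]
```

In a four-block scan only the very first read has to wait for storage. The other three find their block already in memory.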

The table below shows GGFS vs. HDFS (on Flash-based SSDs) benchmark results for raw IO operations:

| Benchmark | GGFS, ms. | HDFS, ms. | Boost, % |
|---|---|---|---|
| File Scan | 27 | 667 | 2470% |
| File Create | 96 | 961 | 1001% |
| File Random Access | 413 | 2931 | 710% |
| File Delete | 185 | 1234 | 667% |

The above tests were performed on a 10-node cluster of Dell R610 blades with dual 8-core CPUs, running Ubuntu 12.04, a 10GbE network fabric and a stock unmodified Apache Hadoop 2.x distribution.
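
The Boost column in the GGFS vs. HDFS table is consistent with dividing the HDFS time by the GGFS time and expressing the ratio as a rounded percentage. Assuming that formula (the table itself does not spell it out), a few lines reproduce the column from the raw timings:

```python
def boost_percent(baseline_ms, accelerated_ms):
    """Baseline time divided by accelerated time, as a rounded percentage."""
    return round(baseline_ms / accelerated_ms * 100)


# (GGFS ms, HDFS ms) pairs copied from the benchmark table.
results = {
    "File Scan": (27, 667),
    "File Create": (96, 961),
    "File Random Access": (413, 2931),
    "File Delete": (185, 1234),
}

boosts = {name: boost_percent(hdfs, ggfs) for name, (ggfs, hdfs) in results.items()}
```

Read this way, a boost of 2470% for File Scan means GGFS completed the scan roughly 24.7 times faster than HDFS.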

As you can see from these results the IO performance difference is quite significant. However, HDFS performance as a file system is only a part of Hadoop’s overhead. Another part, no less significant, is the MapReduce overhead. That is what we addressed with In-Memory MapReduce.

Once we had our high performance in-memory file system built and tested, we turned our attention to a MapReduce implementation that would take advantage of in-memory technology.

Hadoop’s MapReduce design is one of the weakest points in Hadoop. It’s basically an inefficiently designed system when it comes to distributed processing. GridGain’s In-Memory MapReduce implementation relies heavily on 7 years of experience developing our widely deployed In-Memory HPC product. It is designed around a record-based approach vs. the key-value approach of traditional MapReduce, and it enables a much more streamlined parallel execution path on data stored in the in-memory file system.

Furthermore, In-Memory MapReduce eliminates the standard overhead associated with the typical Hadoop job tracker polling, task tracker process creation, deployment and provisioning. All in all – GridGain’s In-Memory MapReduce is a highly optimized HPC-based implementation of the MapReduce concept enabling true low-latency data processing of data stored in GGFS.

The diagram below demonstrates the difference between a standard Hadoop MapReduce execution path and GridGain’s In-Memory MapReduce execution path:

As seen in this diagram our MapReduce implementation supports direct execution path from client to data node. Moreover, all execution in GridGain happens in-process with deployment handled automatically and transparently by GridGain.

In-Memory MapReduce also provides integration capability for MapReduce code written in any Hadoop-supported language and not only in native Java or Scala. Developers can easily reuse existing C/C++/Python or any other existing MapReduce code with our In-Memory Accelerator for Hadoop to gain a significant performance boost.

Finally, since we can remove task and job tracker polling, out-of-process execution, and the often unnecessary shuffling and sorting from MapReduce, while letting our products work with a high-performance in-memory file system, we can start seeing 10x – 100x performance increases on typical MapReduce payloads. This is not just theory: our tests and our customers can confirm this.

Below are the results of one of our internal tests that utilizes both the In-Memory File System and In-Memory MapReduce. This test was specifically designed to show the maximum performance of GridGain’s Accelerator vs. a stock Hadoop distribution for heavy I/O MapReduce jobs:

| Nodes | Hadoop, ms. | Hadoop + GridGain Accelerator, ms. | Boost, % |
|---|---|---|---|
| 5 | 298,000 | 11,622 | 2,564% |
| 10 | 201,350 | 5,537 | 3,636% |
| 15 | 158,997 | 2,385 | 6,667% |
| 20 | 122,008 | 1,647 | 7,407% |
| 30 | 97,833 | 1,174 | 8,333% |
| 40 | 82,771 | 780 | 10,612% |

Tests were performed on a cluster of Dell R610 blades with dual 8-core CPUs, running Ubuntu 12.04, a 10GbE network fabric, a stock unmodified Apache Hadoop 2.x distribution and the GridGain 5.2 release.

No serious distributed system can be used without comprehensive DevOps support and In-Memory Accelerator for Hadoop comes with a comprehensive unified GUI-based management and monitoring tool called GridGain Visor. Over the last 12 months we’ve added significant support in Visor for Hadoop Accelerator.

Visor provides deep DevOps capabilities including an operations & telemetry dashboard, database and compute grid management, as well as GGFS management that provides GGFS monitoring and file management between HDFS, local and GGFS file systems.

As part of GridGain Visor, In-Memory Accelerator For Hadoop also comes with a GUI-based file system profiler, which allows you to keep track of all operations your GGFS or HDFS file systems make and identifies potential hot spots.

The GGFS profiler tracks the speed and throughput of reads, writes and various directory operations for all files, and displays these metrics in a convenient view which allows you to sort based on any profiled criteria, e.g. from slowest write to fastest. The profiler also makes suggestions whenever it is possible to gain performance by loading file data into in-memory GGFS.

After almost 2 years of development we have a well-rounded product that can help you accelerate Hadoop MapReduce up to 100x with minimal integration and effort. It’s based on our innovative high-performance in-memory file system and in-memory MapReduce implementation coupled with one of the best management and monitoring tools.

If you want to be able to say the words “milliseconds” and “Hadoop” in one sentence – you need to take a serious look at GridGain’s In-Memory Hadoop Accelerator.

We are happy to announce the general availability release of GridGain 5.2, which includes updates to all products in the platform.

We anticipate this being the last mid-point release in the platform before we roll out the 6.0 line in Q1 or Q2 2014 (we are still planning to have bi-weekly service releases going forward as usual).

During the past months we’ve been working very diligently to improve the general usability of our products: from first impressions, to POCs, to production use. Despite the fact that GridGain has enjoyed a stellar record on this front for years – the platform’s size is growing rapidly (we are now at almost 4x the size of the entire Hadoop codebase, for example) – and we need to make sure that size and complexity don’t overshadow the simplicity and usability our products have enjoyed so far.

We’ve added many features and enhancements: better error messages, automatic configuration conflict detection, automatic backward compatibility checks, and better documentation.

Work in this direction will continue. We listen to our customers and pay attention to how they use our products. We make improvements every sprint.

One of the biggest improvements in the last 6 months is performance for non-transactional use cases. GridGain has been winning every benchmark when it comes to distributed ACID transactions – but we haven’t had the same winning margins when it came to simpler, non-transactional payloads.

It’s fixed now.

We are currently running over 50 benchmarks against every competing database and data grid product (all seven of them) and are winning over 95% of them, some by as much as 3-4x. That includes 100% of distributed ACID transactional use cases and most of the non-transactional use cases (EC, simple atomicity, local-only transactions, etc.).

GridGain still holds the record of achieving 1 Billion TPS on 10 commodity Dell R610 blades. The record was achieved in an open tender and is verifiable. No other product has yet achieved this level of performance.

There’s plenty of exciting stuff that we’ve been working on for the past 6-9 months that will be made public early next year when the GridGain 6.0 platform rolls out. Some features have trickled out to the public – but most have been kept under wraps for the next release.

As always, grab your free download of GridGain at http://www.gridgain.com/download and check out our constantly growing documentation center for all your screencasts, videos, white papers, and technical documentation: http://www.gridgain.com/documentation

In the last 12 months we observed a growing trend that use cases for distributed caching are rapidly going away as customers are moving up stack… in droves.

Let me elaborate by highlighting three points that when combined provide a clear reason behind this observation.

In the last 3-5 years traditional RDBMSs and the new crop of simpler NewSQL/NoSQL databases have mastered in-memory caching and now provide comprehensive caching and even general in-memory capabilities. MongoDB and CouchDB, for example, can be configured to run mostly in-memory (with plenty of caveats, but nonetheless). And when Oracle 12 and SAP HANA are in the game (with even more caveats) – you know it’s mainstream already.

There are simply fewer reasons today for just caching intermediate DB results in memory, as data sources themselves do a pretty decent job at that, a 10GbE network is often fast enough and the much faster IB interconnect is getting cheaper. Put another way, the performance benefits of distributed caching relative to the cost are simply not as big as they were 3-5 years ago.

The emerging “Caching The Cache” anti-pattern is a clear manifestation of this conundrum. And this relates not only to historically Java-based caching products but also to products like Memcached. It’s no wonder that Java’s JSR107 has been such a slow endeavor as well.

At the same time, as customers move more and more payloads to in-memory processing, they are naturally starting to have bigger expectations than simple key/value access or full-scan processing. As the MPP style of processing on large in-memory data sets becomes the new “norm”, these customers are rightly looking for advanced clustering, ACID distributed transactions, complex SQL optimizations, various forms of MapReduce – all with deep sub-second SLAs – as well as many other features.

Distributed caching simply doesn’t cut it: it’s one thing to live without a distributed hash map for your web sessions – but it’s a completely different story to approach mission critical enterprise data processing without transactional data center replication, comprehensive computational and data load balancing, SQL support or complex secondary indexes for MPP processing.

Apples and oranges…

And not only are customers moving more and more data to in-memory processing, but their computational complexity is growing as well. In fact, just storing data in-memory produces no tangible business value. It is the processing of that data, i.e. computing over the stored data, that delivers net new business value – and based on our daily conversations with prospects, companies across the globe are getting more sophisticated about it.

Distributed caches and to a certain degree data grids missed that transition completely. While concentrating on data storage in memory they barely, if at all, provide any serious capabilities for MPP or MPI-based or MapReduce or SQL-based processing of the data – leaving customers scrambling for this additional functionality. What we are finding as well is that just SQL or just MapReduce, for instance, is often not enough as customers are increasingly expecting to combine the benefits of both (for different payloads within their systems).

Moreover, the tight integration between computations and data is axiomatic for enabling the “move computations to the data” paradigm, and this is something that simply cannot be bolted onto an existing distributed cache or data grid. You almost have to start from scratch – and this is often very hard for existing vendors.

And unlike the previous two points this one hits below the belt: there’s simply no easy way to solve it or mitigate it.

So, what’s next? I don’t really know what the category name will be. Maybe it will be Data Platforms that would encapsulate all these new requirements – maybe not. Time will tell.

At GridGain we often call our software an end-to-end in-memory computing platform. Instead of one do-everything product we provide several individual but highly integrated products that address every major type of payload of in-memory computing: from HPC, to streaming, to database, and to Hadoop acceleration.

It is an interesting time for in-memory computing. As a community of vendors and early customers we are going through our first serious transition from the stage where simplicity and ease of use were dominant for the early adoption of the disruptive technology – to a stage where growing adoption now brings in more sophisticated requirements and higher customer expectations.

As vendors – we have our work cut out for us.

Excellent quick overview of GridGain Visor – unified administration, management and monitoring tool for GridGain’s suite of in-memory computing products. For those keeping score – this is one of the GridGain components that is written 100% in Scala from the ground up. Enjoy!

Like any fast-growing technology, In-Memory Computing has attracted a lot of interest and writing in the last couple of years. It’s bound to happen that some of the information gets stale pretty quickly – while some is simply not very accurate to begin with. And thus myths are starting to grow and take hold.

I want to talk about some of the misconceptions that we are hearing almost on a daily basis here at GridGain and provide necessary clarification (at least from our point of view). Being one of the oldest companies working in the in-memory computing space for the last 7 years, we’ve heard and seen all of it by now – and earned a certain amount of perspective on what in-memory computing is and, most importantly, what it isn’t.

Let’s start at… the beginning. What is in-memory computing? Kirill Sheynkman from RTP Ventures gave the following crisp definition which I like very much:

“In-Memory Computing is based on a memory-first principle utilizing high-performance, integrated, distributed main memory systems to compute and transact on large-scale data sets in real-time – orders of magnitude faster than traditional disk-based systems.”

The most important part of this definition is “memory-first principle”. Let me explain…

Memory-first principle (or architecture) refers to a fundamental set of algorithmic optimizations one can take advantage of when data is stored mainly in Random Access Memory (RAM) vs. in block-level devices like HDD or SSD.

RAM has dramatically different characteristics than block-level devices including disks, SSDs or Flash-on-PCI-E arrays. Not only is RAM ~1000x faster as a physical medium, it also completely eliminates the traditional overhead of block-level devices including marshaling, paging, buffering, memory-mapping, possible networking, OS I/O, and the I/O controller.

Let’s look at an example: say you need to read a single record in your program.

In an in-memory context your code will be compiled to interact with the memory controller and read the record directly from local RAM in the exact format you need (i.e. your object representation in a particular programming language) – in most cases that will result in simple pointer arithmetic. If you use a proper vectorized execution technique – you’ll often read it from the L2 cache of your CPUs. All in all – we are talking about nanoseconds and this performance is guaranteed for all cases.

If you read the same record from a block-level device – you are in for a very different ride… Your code will have to deal with OS I/O, buffered reads, the I/O controller, the seek time of the device, and de-marshaling the byte stream that you get from it back into the object representation that you actually need. In the worst-case scenario – we’re talking a dozen milliseconds. Note that SSDs and Flash-on-PCI-E only improve the portion of the overhead related to the seek time of the device (and only marginally).
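
The two read paths can be put side by side in a short sketch. It uses Python's `pickle` as a stand-in for marshaling and a temporary file as the “block-level device”; both are assumptions made for the illustration, and the sketch deliberately makes no timing claims since Python itself adds overhead on both paths.

```python
import os
import pickle
import tempfile

record = {"id": 42, "name": "Alice"}

# In-memory path: the object is already in the form the program needs,
# so reading it is a lookup that hands back a reference to the same object.
in_memory = {42: record}
from_memory = in_memory[42]

# Block-device path: the same record has to be marshaled to bytes, written
# through the OS, read back through the OS, and de-marshaled into a new object.
with tempfile.TemporaryDirectory() as directory:
    path = os.path.join(directory, "record.bin")
    with open(path, "wb") as f:
        f.write(pickle.dumps(record))
    with open(path, "rb") as f:
        from_disk = pickle.loads(f.read())
```

Both paths end with the same data, but the second one passes through several layers that the first one never touches, and it produces a copy rather than the object itself.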

Taking advantage of these differences and optimizing your software accordingly – is what memory-first principle is all about.

Now, let’s get to the myths.

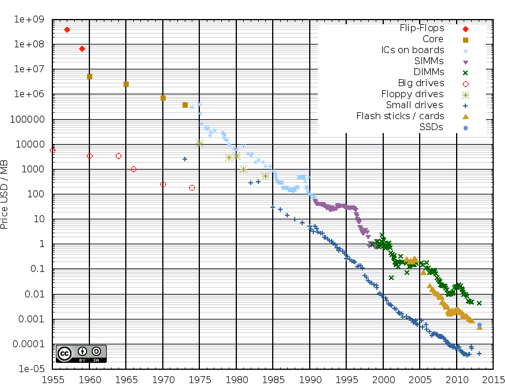

This is one of the most enduring myths of in-memory computing. Today – it’s simply not true. Five or ten years ago, however, it was indeed true. Look at the historical chart of USD/MB storage pricing to see why:

The interesting trend is that price of RAM is dropping 30% every 12 months or so and is solidly on the same trajectory as price of HDD which is for all practical reasons is almost zero (enterprises care more today about heat, energy, space than a raw price of the device).

The price of a 1TB RAM cluster today is anywhere between $20K and $40K – and that includes all the CPUs, over a petabyte of disk-based storage, networking, etc. Cisco UCS, for example, offers very competitive white-label blades in the $30K range for a 1TB RAM setup: http://buildprice.cisco.com/catalog/ucs/blade-server Smart shoppers on eBay can easily beat even the $20K price barrier (as we did at GridGain for our own recent testing/CI cluster).

In a few years the same 1TB RAM cluster setup will be available for $10K-15K – which makes it all but a commodity at that level.
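As a back-of-the-envelope check on that projection, here is a small Java sketch that compounds the ~30% yearly drop mentioned above. It assumes the whole cluster price follows the DRAM trend and starts from the $30K figure; both are simplifications of mine, and the class and method names are illustrative.

```java
final class RamPriceProjection {
    // Price after `years` of a constant yearly percentage drop.
    static double projectedPrice(double priceToday, double yearlyDrop, int years) {
        return priceToday * Math.pow(1.0 - yearlyDrop, years);
    }

    public static void main(String[] args) {
        // Assumption: the $30K cluster price falls 30% per year.
        for (int year = 0; year <= 3; year++) {
            System.out.printf("year %d: $%,.0f%n", year, projectedPrice(30_000, 0.30, year));
        }
    }
}
```

Under these assumptions the price falls to roughly $14.7K after two years and $10.3K after three, which is in line with the $10K-15K range.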

And don’t forget about Memory Channel Storage (MCS) that aims to revolutionize storage by providing the Flash-in-DIMM form factor – I blogged about it a few weeks ago.

This myth is based on a deep-rooted misunderstanding about in-memory computing. Blame us as well as other in-memory computing vendors, as we evidently did a pretty poor job of explaining this subject.

The fact of the matter is – almost all in-memory computing middleware (apart from the very simplistic kind) offers one or more strategies for in-memory backups, durable storage backups, disk-based swap space overflow, etc.

More sophisticated vendors provide a comprehensive tiered storage approach where users can decide what portion of the overall data set is stored in RAM, local disk swap space or RDBMS/HDFS – where each tier can store progressively more data but with progressively longer latencies.

Yet another source of confusion is the difference between operational datasets and historical datasets. In-memory computing is not aimed at replacing enterprise data warehouse (EDW), backup or offline storage services – like Hadoop, for example. In-memory computing is aiming at improving operational datasets that require mixed OLTP and OLAP processing and in most cases are less than 10TB in size. In other words – in-memory computing doesn’t suffer from all-or-nothing syndrome and never requires you to keep all data in memory.

If you consider the totality of the data stored by any one enterprise – the disk still has a clear place as a medium for offline, backup or traditional EDW use cases – and thus the durability is there where it always has been.

The variations of this myth include the following:

Mounting RAM disk is a very poor way of utilizing memory from every technical angle (see above).

As far as SSDs go – for some use cases – the marginal performance gain that you can extract from flash storage over spinning disk could be enough. In fact – if you are absolutely certain that a marginal improvement is all you will ever need for a particular application – flash storage is the best bet today.

However, for a rapidly growing number of use cases – speed matters. And it matters more and for more businesses every day. In-memory computing is not about marginal 2-3x improvement – it is about giving you 10-100x improvements enabling new businesses and services that simply weren’t feasible before.

There’s one story that I’ve been telling for quite some time now and it shows a very telling example of how in-memory computing relates to speed…

Around 6 years ago GridGain had a financial customer who had a small application (~1500 LOC in Java) that took 30 seconds to prepare a chart and a table with some historical statistical results for a given basket of stocks (all stored in Oracle RDBMS). They wanted to put it online on their website. Naturally, users won’t wait for half a minute after they pressed the button – so, the task was to make it around 5-6 seconds. Now – how do you make something 5 times faster?

We initially looked at every possible angle: faster disks (even SSDs, which were very expensive then), RAID systems, faster CPUs, rewriting everything in C/C++, running on a different OS, Oracle RAC – or any combination thereof. But nothing would make the application run 5x faster – not even close… Only when we brought the dataset into memory and parallelized the processing over 5 machines using in-memory MapReduce were we able to get results in less than 4 seconds!

The moral of the story is that you don’t have to have a NASA-size problem to utilize in-memory computing. In fact, every day thousands of businesses are solving performance problems that initially look trivial but in the end can only be solved with in-memory computing speed.

Speed also matters in the raw sense as well. Look at this diagram from Stanford about relative performance of disks, flash and RAM:

As DRAM closes its pricing gap with flash, such a dramatic difference in raw performance will become more and more pronounced and tangible for businesses of all sizes.

This is one of those misconceptions that you hear mostly from analysts. Most analysts look at SAP HANA, Oracle Exalytics or something like QlikView – and they conclude that this is all that in-memory computing is about, i.e. a database or in-memory caching for faster analytics.

There’s a logic behind it, of course, but I think this is a rather shortsighted view.

First of all, in-memory computing is not a product – it is a technology. The technology is used to build products. In fact – nobody sells just “in-memory computing” but rather products that are built with in-memory computing.

I also think that in-memory databases are an important use case… for today. They solve a specific use case that everyone readily understands, i.e. a faster system of record. It’s sort of a low-hanging fruit of in-memory computing and it gets in-memory computing popularized.

I do, however, think that the long term growth for in-memory computing will come from streaming use cases. Let me explain.

Streaming processing is typically characterized by a massive rate at which events are coming into a system. A number of potential customers we’ve talked to indicated that they need to process a sustained stream of up to 100,000 events per second without a single event loss. For a typical 30-second sliding processing window we are dealing with 3,000,000 events, shifting by 100,000 every second, which have to be individually indexed, continuously processed in real time and eventually stored.

This downpour will choke any disk I/O (spinning or flash). The only feasible way to sustain this load and the corresponding business processing is to use in-memory computing technology. There’s simply no other storage technology today that supports that level of requirements.
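The window arithmetic above (100,000 events per second times 30 seconds gives 3,000,000 events in flight) can be sketched with a plain in-memory deque. This is only an illustration in standard Java, not GridGain’s streaming API; the class name, the evenly spaced synthetic timestamps, and the scaled-down simulation rate are all assumptions of mine.

```java
import java.util.ArrayDeque;

final class SlidingWindowSketch {
    private final ArrayDeque<Long> timestamps = new ArrayDeque<>();
    private final long windowMillis;

    SlidingWindowSketch(long windowMillis) { this.windowMillis = windowMillis; }

    // Add an event and evict everything that has slid out of the window.
    void add(long timestampMillis) {
        timestamps.addLast(timestampMillis);
        while (timestamps.peekFirst() <= timestampMillis - windowMillis) {
            timestamps.pollFirst();
        }
    }

    int size() { return timestamps.size(); }

    // Events held in a full window at a steady arrival rate.
    static long steadyStateSize(long eventsPerSecond, long windowSeconds) {
        return eventsPerSecond * windowSeconds;
    }

    // Feed `seconds` worth of evenly spaced events and return the final window size.
    static int simulate(int eventsPerSecond, int windowSeconds, int seconds) {
        SlidingWindowSketch w = new SlidingWindowSketch(windowSeconds * 1000L);
        for (int s = 0; s < seconds; s++) {
            for (int i = 0; i < eventsPerSecond; i++) {
                w.add(s * 1000L + (i * 1000L) / eventsPerSecond);
            }
        }
        return w.size();
    }

    public static void main(String[] args) {
        // The figures from the text: 100,000 events/s over a 30 s window.
        System.out.println(steadyStateSize(100_000, 30));
        // Scaled-down simulation (1,000 events/s) to keep the demo light.
        System.out.println(simulate(1_000, 30, 40));
    }
}
```

Every `add` both inserts and evicts, so at the full rate the structure absorbs 100,000 insertions and 100,000 evictions each second while holding 3,000,000 entries, before any indexing or processing is done.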

So we strongly believe that in-memory computing will reign supreme in streaming processing.

Jon Webster’s presentation at inaugural Bay Area In-Memory Computing Meetup:

Or – direct link on Vimeo.

I picked this chapter up from GridGain’s Document Center. I like it as it gives a simple, plain-English, high-level overview of our In-Memory Database: no coding, no diagrams, no deep dives. Just a quick and easy rundown of what’s there…

GridGain IMDB is a distributed, Java-based, object-based key-value datastore. Logically it can be viewed as a collection of one or more caches (a.k.a. maps or dictionaries). Each cache is a distributed collection of key-value pairs. Both key and value are represented as Java objects and can be of any user-defined type.

Every cache must be pre-configured individually and cannot be created on the fly (due to distributed consistency semantics). You’ll find that cache and cache projections will be your main API entry points while working with GridGain IMDB in embedded mode.

Each cache has many configuration properties with the main one being its type. GridGain IMDB supports three cache types: local, replicated and partitioned.

As the name implies, the local mode stores all data locally without any distribution, providing lightweight transactional local storage. Replicated cache replicates (copies) data to all nodes in the cluster, resulting in the best high availability but reducing the overall in-memory capacity of the database since data is copied everywhere. Partitioned mode is the most scalable mode as it equally partitions data across all nodes in the cluster so that each node is only responsible for a small portion of the data.

The combination of these storage modes in a single database (as well as many specific configuration and optimization properties available for each mode) makes GridGain IMDB a very convenient distributed datastore as it doesn’t force you to use just one specific storage model.

GridGain IMDB stores data in a layered storage system that consists of 4 layers: JVM on-heap memory, JVM off-heap memory, local disk-based swap space, and an optional durable cache store. Each layer can store more data but entails progressively higher latencies for data access. The developer has full control over the configuration of these layers.
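The trade-off between the three modes can be sketched in a few lines of Java. This is not the GridGain API: the `Mode` enum, the method names and the hash-modulo placement are my own simplifications (backups are ignored too), meant only to show which nodes hold a given key and how much distinct data fits.

```java
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.IntStream;

final class CacheModeSketch {
    enum Mode { LOCAL, REPLICATED, PARTITIONED }

    // Which nodes (numbered 0..nodeCount-1) hold a copy of the key.
    static List<Integer> ownerNodes(Mode mode, Object key, int localNode, int nodeCount) {
        switch (mode) {
            case LOCAL:
                return List.of(localNode);               // never leaves this node
            case REPLICATED:
                return IntStream.range(0, nodeCount)     // a copy on every node
                        .boxed().collect(Collectors.toList());
            default:
                // PARTITIONED: exactly one owner; plain modulo stands in for real partitioning.
                return List.of(Math.floorMod(key.hashCode(), nodeCount));
        }
    }

    // Distinct data that fits, given the same memory on every node.
    // For LOCAL this is what a single node can hold on its own.
    static long usableCapacityGb(Mode mode, int nodeCount, long perNodeGb) {
        return mode == Mode.PARTITIONED ? nodeCount * perNodeGb : perNodeGb;
    }

    public static void main(String[] args) {
        for (Mode mode : Mode.values()) {
            System.out.println(mode + ": owners=" + ownerNodes(mode, "order-42", 0, 4)
                    + ", capacity=" + usableCapacityGb(mode, 4, 64) + " GB");
        }
    }
}
```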
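A layered lookup of this kind can be pictured as a walk from the fastest tier to the slowest. The sketch below is plain Java with in-memory maps standing in for all four layers; the class, its methods, and the promote-on-hit behaviour are assumptions of mine for illustration, not a description of how GridGain IMDB implements its layers.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

final class TieredLookupSketch {
    // Tiers ordered fastest to slowest; plain maps stand in for the real layers.
    private final List<Map<String, String>> tiers = new ArrayList<>();
    private final List<String> names;

    TieredLookupSketch(String... tierNames) {
        names = List.of(tierNames);
        for (int i = 0; i < tierNames.length; i++) {
            tiers.add(new HashMap<>());
        }
    }

    void put(int tier, String key, String value) {
        tiers.get(tier).put(key, value);
    }

    // Returns the name of the tier that served the read, or null on a miss.
    // Assumption: a hit in a slower tier is promoted into the fastest tier.
    String tierServing(String key) {
        for (int i = 0; i < tiers.size(); i++) {
            String value = tiers.get(i).get(key);
            if (value != null) {
                if (i > 0) {
                    tiers.get(0).put(key, value);
                }
                return names.get(i);
            }
        }
        return null;
    }

    public static void main(String[] args) {
        TieredLookupSketch store =
                new TieredLookupSketch("on-heap", "off-heap", "swap", "durable store");
        store.put(2, "order-42", "payload");
        System.out.println(store.tierServing("order-42")); // first read comes from swap
        System.out.println(store.tierServing("order-42")); // second read comes from on-heap
    }
}
```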

Another interesting characteristic of GridGain IMDB is that it was developed first as a highly distributed system and only later became a full-fledged database. This reversed approach makes data and processing distribution a natural capability of the database.

GridGain IMDB is based on unique HyperClustering technology that enables GridGain IMDB to scale to 1000s of nodes in a single transactional topology (based on actual production customers).

GridGain IMDB clustering is based on peer-to-peer topology, its transaction implementation is based on advanced MVCC-based design, and its partitioning is based on automatic multilayer consistent hashing implementation – free from sharding limitations or other crude data distribution approaches.
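For readers who haven’t met consistent hashing before, here is a textbook single-ring version in Java. It is a generic sketch, not GridGain’s multilayer implementation; the class name, the 64 virtual points per node and the hash mixer are my own choices. The property worth noticing is that when a node joins, the only keys that change owner are the ones taken over by the new node.

```java
import java.util.Map;
import java.util.TreeMap;

final class ConsistentHashSketch {
    private static final int VIRTUAL_POINTS = 64; // assumed value, for illustration
    private final TreeMap<Integer, String> ring = new TreeMap<>();

    // Spread String.hashCode() bits more evenly (murmur3-style finalizer).
    static int hash(String s) {
        int h = s.hashCode();
        h ^= h >>> 16; h *= 0x85ebca6b;
        h ^= h >>> 13; h *= 0xc2b2ae35;
        h ^= h >>> 16;
        return h;
    }

    // Place several virtual points for the node on the ring.
    void addNode(String node) {
        for (int i = 0; i < VIRTUAL_POINTS; i++) {
            ring.put(hash(node + "#" + i), node);
        }
    }

    // Owner is the first ring point at or after the key's hash, wrapping around.
    String ownerOf(String key) {
        Map.Entry<Integer, String> e = ring.ceilingEntry(hash(key));
        return (e != null ? e : ring.firstEntry()).getValue();
    }

    public static void main(String[] args) {
        ConsistentHashSketch before = new ConsistentHashSketch();
        ConsistentHashSketch after = new ConsistentHashSketch();
        for (String n : new String[] {"A", "B", "C"}) {
            before.addNode(n);
            after.addNode(n);
        }
        after.addNode("D"); // a node joins the topology

        int moved = 0;
        for (int i = 0; i < 10_000; i++) {
            if (!before.ownerOf("key-" + i).equals(after.ownerOf("key-" + i))) {
                moved++;
            }
        }
        System.out.println(moved + " of 10000 keys changed owner after D joined");
    }
}
```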

One of the most unique characteristics of GridGain IMDB is the full integration of In-Memory HPC at the core of the database.

Many traditional RDBMS and No/NewSQL databases only address data storage and rudimentary data processing. In this scenario the data is retrieved from the database and has to be moved to some other processing node. Once data is processed, it is usually discarded.

Such data movement between different layers, even minimal, is almost always at the core of the scalability and performance problems in highly distributed systems.

GridGain IMDB was designed from the ground up to minimize unnecessary data movements and instead move computations to the data whenever possible – hence its integration of HPC technology is at the very core of the database. Computations are dramatically smaller in size – often by a factor of 1000, they don’t change as often as the data, they have a strong and easily defined affinity to the data they require, and they typically put only a negligible load on the network and JVMs.

What is even more important – this approach allows for clean processing parallelization of data stored in the database since the computing task can now be intelligently split into sub-tasks that can be sent to remote nodes to work in parallel on their respective local data sub-sets with absolutely zero global resource contention.
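The idea of splitting a task into sub-tasks that each work on their own local data subset can be mimicked on a single JVM. In the sketch below each inner list stands in for the data held by one node, and a small function is "sent" to every partition and run in parallel; only the small partial results travel back to be reduced. This uses standard `CompletableFuture` from the JDK, not GridGain’s compute API, and the class and method names are hypothetical.

```java
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.function.Function;
import java.util.stream.Collectors;

final class ComputeToDataSketch {
    // Run `job` against every partition in parallel and collect the partial results.
    static <T, R> List<R> mapOnPartitions(List<List<T>> partitions, Function<List<T>, R> job) {
        List<CompletableFuture<R>> futures = partitions.stream()
                .map(p -> CompletableFuture.supplyAsync(() -> job.apply(p)))
                .collect(Collectors.toList());
        return futures.stream().map(CompletableFuture::join).collect(Collectors.toList());
    }

    // Map step: each partition sums its own data. Reduce step: add the partial sums.
    static long sum(List<List<Integer>> partitions) {
        List<Long> partials = mapOnPartitions(partitions,
                p -> p.stream().mapToLong(Integer::longValue).sum());
        return partials.stream().mapToLong(Long::longValue).sum();
    }

    public static void main(String[] args) {
        List<List<Integer>> partitions = List.of(
                List.of(1, 2, 3),   // data "owned" by node 0
                List.of(4, 5),      // node 1
                List.of(6));        // node 2
        System.out.println(sum(partitions)); // 21
    }
}
```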

GridGain IMDB supports MapReduce, distributed SQL, MPP, MPI, RPC, File System, and Document API type of data processing and querying – the deepest and the widest eco-system of HPC processing paradigms provided by any database or HPC framework.

GridGain IMDB can be queried and programmed in many different ways. In an external context you can use Java, C++, or C# drivers. GridGain IMDB also natively supports custom REST and Memcached protocols.

In embedded mode you can use distributed SQL and JDBC as well as Lucene, Text and full-scan queries. For complex data computations you can use in-memory MapReduce, MPP, RPC and MPI-based processing. All programming techniques in embedded mode have deeply customizable APIs including distributed extensions to SQL, Java or Scala-based custom SQL functions, streaming MapReduce, distributed continuations, connected tasks support, etc.

GridGain IMDB also provides in-memory file system (GGFS – GridGain File System) as well as full support for MongoDB Document API protocol.

Unlike many traditional, NewSQL and NoSQL databases GridGain IMDB is designed to be easily programmable in embedded mode.

The traditional (external) approach dictates that the database should be deployed separately and that data processing applications access it through some networking protocol and client library (i.e. the driver). This implies significant driver overhead and data movement that make any HPC or real-time database processing impossible, as we discussed above.

While supporting external access via its C++, .NET, and Java drivers – GridGain IMDB also natively supports embedded mode, where data processing logic can be deployed directly into the database itself and therefore can be programmatically accessed in the same process. In other words, GridGain IMDB allows you to initiate a distributed data processing task right from the database process itself, removing any driver overhead and its significant API limitations – enabling rich functionality and sub-millisecond response for complex distributed data processing tasks.

Among many benefits, this is becoming a critically important capability for rapidly growing machine-to-machine and streaming use cases that don’t have human interaction delays built in and require minimal latencies and linear horizontal scalability.

GridGain IMDB provides advanced capabilities when it comes to fault tolerance and durability.

Each cache can be configured with one or more active backups, which provide data redundancy when a node crashes as well as improved performance in read-mostly scenarios. On topology changes (a node leaves or joins) the comprehensive pre-loading subsystem will make sure that data is synchronously or asynchronously re-partitioned while maintaining the desired consistency and availability. Each cache can be independently configured for transactional read-through and write-through to durable storage such as an RDBMS, HDFS, or a file system to make sure that data is backed up in a durable datastore, if required.
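Read-through and write-through are easy to picture with two maps: one for memory and one standing in for the durable store. The Java sketch below is a deliberately non-transactional toy with hypothetical names; it only shows the order of operations, not how GridGain IMDB implements its cache store.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

final class ReadWriteThroughSketch {
    private final Map<String, String> memory = new ConcurrentHashMap<>();
    private final Map<String, String> durableStore; // stands in for RDBMS, HDFS or a file system
    int storeReads = 0;                              // how often we had to go to the store

    ReadWriteThroughSketch(Map<String, String> durableStore) {
        this.durableStore = durableStore;
    }

    // Write-through: persist first, then update memory.
    void put(String key, String value) {
        durableStore.put(key, value);
        memory.put(key, value);
    }

    // Read-through: serve from memory, load from the store only on a miss.
    String get(String key) {
        String value = memory.get(key);
        if (value == null) {
            storeReads++;
            value = durableStore.get(key);
            if (value != null) {
                memory.put(key, value);
            }
        }
        return value;
    }

    public static void main(String[] args) {
        Map<String, String> store = new HashMap<>();
        store.put("a", "1"); // data that exists only in the durable store so far
        ReadWriteThroughSketch cache = new ReadWriteThroughSketch(store);

        System.out.println(cache.get("a") + ", store reads: " + cache.storeReads);
        System.out.println(cache.get("a") + ", store reads: " + cache.storeReads);
        cache.put("b", "2");
        System.out.println("store has b: " + store.containsKey("b"));
    }
}
```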

In case of network segmentation, a.k.a. “split-brain” problem, GridGain IMDB provides pluggable segmentation resolution architecture where dirty writes or reads are impossible regardless of how segmented your cluster gets.

For complex and mission critical deployments GridGain IMDB provides data center replication. When data center replication is turned on, GridGain IMDB will automatically make sure that each data center is consistently backing up its data to other data centers (there can be more than one). GridGain supports both active-active and active-passive modes for replication.

GridGain IMDB has full support for distributed transactions supporting all ACID properties including support for Optimistic and Pessimistic concurrency levels and READ_COMMITTED, REPEATABLE_READ, and SERIALIZABLE isolation levels.

For JEE environments, like application servers, GridGain IMDB provides automatic integration with JTA/XA. Essentially GridGain becomes an XA resource and will automatically check if there is an active JTA transaction present.

In addition to transactions, where GridGain IMDB allows you to execute multiple data operations atomically, GridGain also supports single atomic CAS (compare-and-set) operations, such as put-if-absent, compare-and-set, and compare-and-remove.
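If these three operations sound abstract, the JDK’s own `ConcurrentMap` offers single-JVM analogues: `putIfAbsent`, the three-argument `replace`, and the two-argument `remove`. The sketch below uses only those standard methods to show the semantics; it is not the GridGain API, and the class name is made up.

```java
import java.util.Arrays;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ConcurrentMap;

final class AtomicOpsSketch {
    // Runs each operation once and reports whether it took effect.
    static boolean[] run() {
        ConcurrentMap<String, Integer> map = new ConcurrentHashMap<>();
        boolean inserted      = map.putIfAbsent("k", 1) == null; // put-if-absent: key was free
        boolean insertedAgain = map.putIfAbsent("k", 2) == null; // key already present, no change
        boolean swapped       = map.replace("k", 1, 10);         // compare-and-set: expected 1, found 1
        boolean staleSwap     = map.replace("k", 1, 20);         // expected 1, found 10, no change
        boolean staleRemove   = map.remove("k", 1);              // compare-and-remove: value mismatch
        boolean removed       = map.remove("k", 10);             // value matches, entry removed
        return new boolean[] {inserted, insertedAgain, swapped, staleSwap, staleRemove, removed};
    }

    public static void main(String[] args) {
        System.out.println(Arrays.toString(run()));
    }
}
```

Each call either applies fully or not at all, which is what lets callers build lock-free update loops on top of them.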

For more information head over to GridGain’s In-Memory Database.