Almost two years ago, Dmitriy and I stood in front of a whiteboard at GridGain’s office thinking: “How can we deliver the real-time performance of GridGain’s in-memory technology to Hadoop customers without asking them to rip and replace their systems and without asking them to move their datasets off Hadoop?”

Given Hadoop’s architecture, the task seemed daunting, and it proved to be one of the more challenging engineering puzzles we have had to solve.

Two years of development, tens of thousands of lines of Java, Scala and C++ code, multiple design iterations, several releases and dozens of benchmarks later, we have finally built a product that delivers real-time performance to Hadoop customers with seamless integration and no tedious ETL. Actual customer deployments can now prove our performance claims and validate our product’s architecture.

Here’s how we did it.

The Idea – In-Memory Hadoop Accelerator

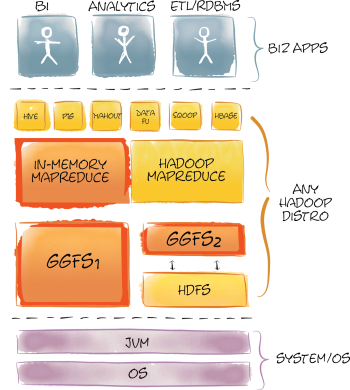

Hadoop is based on two primary technologies: HDFS for storing data, and MapReduce for processing these data in parallel. Everything else in Hadoop and the Hadoop ecosystem sits atop these foundation blocks.

Originally, neither HDFS nor MapReduce was designed with real-time performance in mind. In order to deliver real-time processing without moving data out of Hadoop onto another platform, we had to improve the performance of both of these subsystems.

We decided to develop a high-performance in-memory file system which would provide 100% compatibility with HDFS and an optimized MapReduce implementation which would take advantage of this real-time file system. By doing so, we could offer all the advantages of our in-memory platform while minimizing the disruption of our customers’ existing Hadoop investments.

There are many projects and products that aim to improve Hadoop performance. HDFS2, Apache Tez, Cloudera Impala, Hortonworks Stinger, ScaleOut hServer and Apache Spark, to name but a few, all tackle Hadoop performance issues in various ways. GridGain puts a new spin on some of these approaches, delivering unmatched performance gains while fanatically maintaining our commitment to letting customers change as little code as possible and quickly get the benefits an in-memory computing platform can bring to their big data installations.

From a technology standpoint, GridGain’s In-Memory Hadoop Accelerator has some similarity to the architecture of Spark (optimized MapReduce), ScaleOut and HDFS2 (in-memory caching without ETL), and some features of Apache Tez (in-process execution). However, GridGain’s In-Memory Accelerator is the only product for Hadoop available today that combines both a high-performance HDFS-compatible file system and optimized in-memory MapReduce, along with many other features, in one fully integrated product.

In-Memory File System

First, we implemented GridGain’s In-Memory File System (GGFS) to accelerate I/O in the Hadoop stack. The original idea was that GGFS alone would be enough to gain a significant performance increase. However, while we did see significant performance gains using GGFS, when working with our customers we quickly found some not-so-obvious performance limitations in the way Hadoop performs MapReduce. It became clear to us that GGFS alone would not be enough, but it was a critical piece that we needed to build first.

Note that you shouldn’t confuse GGFS with much slower alternatives like RAM disk. GGFS is based on our Memory-First architecture and addresses more than just the seek time of the “device”.

From the get-go we designed GGFS to support both Hadoop v1 and Hadoop v2 (YARN). Further, we designed GGFS to work in two modes:

- Primary (standalone), and

- Secondary (caching HDFS).

In primary (standalone) mode GGFS acts as a bona fide Hadoop file system that is plug-and-play compatible with the standard HDFS interface. Our customers use it to deploy a high-performance in-memory Hadoop cluster and use it as any other Hadoop file system, albeit one that trades capacity for maximum performance.

One great added benefit of primary mode is that it does away with the NameNode in the Hadoop deployment. A standard Hadoop deployment requires shared storage for the primary and secondary NameNodes, usually implemented with a complex NFS setup mounted on each NameNode machine. GGFS instead uses GridGain’s In-Memory Database under the hood to provide completely automatic scaling and failover, without any need for additional shared storage or a risky Single Point Of Failure (SPOF) architecture.

Furthermore, unlike Hadoop’s master-slave NameNode design, which prevents Hadoop systems from scaling linearly as nodes are added, GGFS is built on a highly scalable, natively distributed, partitioned data store that provides linear scalability and auto-discovery of new nodes joining the cluster. Removing the NameNode from the architecture enabled dramatically better performance of IO operations.

GGFS’s primary mode provides maximum performance for IO operations but requires moving data from disk-based HDFS to memory-based GGFS (i.e. from one file system to another). While data movement may be appropriate for some use cases, we support another operating mode in which absolutely no ETL is required, so there is no need to move data out of HDFS.

In this second mode, GGFS works as an intelligent secondary in-memory distributed cache over the primary disk-based HDFS file system. It supports both synchronous and asynchronous read-through and write-through to and from HDFS, providing either strong consistency or better performance in exchange for relaxed consistency, with absolute transparency to the user and the applications running on top of it. Users can manually select which files and/or directories should be stored in GGFS and which mode, synchronous or asynchronous, should be used for each of them.
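To make the trade-off between the synchronous and asynchronous modes concrete, here is a minimal Python sketch of a cache sitting in front of a slower store. It is not GGFS code: the class name, the `path_modes` mapping and the `flush` method are hypothetical, and a plain dictionary stands in for HDFS.

```python
from collections import deque


class WriteThroughCache:
    """Toy in-memory cache over a slower backing store (a dict standing in for HDFS)."""

    def __init__(self, backing, path_modes, default_mode="sync"):
        self.backing = backing          # stand-in for the disk-based file system
        self.path_modes = path_modes    # hypothetical config: path prefix -> "sync" or "async"
        self.default_mode = default_mode
        self.memory = {}                # in-memory copies of file contents
        self.pending = deque()          # async writes not yet pushed to the backing store

    def mode_for(self, path):
        # The longest matching prefix decides the mode for a path.
        matches = [prefix for prefix in self.path_modes if path.startswith(prefix)]
        if not matches:
            return self.default_mode
        return self.path_modes[max(matches, key=len)]

    def write(self, path, data):
        self.memory[path] = data
        if self.mode_for(path) == "sync":
            self.backing[path] = data            # strong consistency: store updated before returning
        else:
            self.pending.append((path, data))    # relaxed consistency: store updated later

    def read(self, path):
        if path not in self.memory:              # read-through on a cache miss
            self.memory[path] = self.backing[path]
        return self.memory[path]

    def flush(self):
        # Push queued asynchronous writes to the backing store.
        while self.pending:
            path, data = self.pending.popleft()
            self.backing[path] = data
```

With `path_modes={"/logs": "async", "/logs/audit": "sync"}`, a write to `/logs/app.log` returns before the backing store is updated, while a write under `/logs/audit` reaches the store before the call returns.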

Another interesting feature of GGFS is its smart use of block-level and file-level caching and eviction. When working in primary mode, GGFS uses file-level caching to ensure corruption-free storage (a file is either fully in GGFS or not there at all). In secondary mode, GGFS automatically switches to block-level caching and eviction. What we discovered when working with our customers on real-world Hadoop payloads is that files on HDFS are often not accessed uniformly, i.e. there is significant “locality” in how portions of a file are accessed. Put another way, certain blocks of a file are accessed more frequently than others. That observation led to our block-level caching implementation for the secondary mode, which enables dramatically better memory utilization since GGFS can keep only the most frequently used file blocks in memory, rather than entire files, which can easily run to hundreds of gigabytes in Hadoop.
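The idea of block-level caching can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the GGFS implementation: `BlockCache`, its counters and the tiny 4-byte block size are all hypothetical, chosen so the behavior is easy to follow.

```python
BLOCK_SIZE = 4  # bytes; deliberately tiny for illustration, real block sizes are far larger


class BlockCache:
    """Caches only the blocks that reads actually touch, keyed by (path, block index)."""

    def __init__(self, backing_files):
        self.backing = backing_files    # path -> bytes, stand-in for files on HDFS
        self.blocks = {}                # (path, block_index) -> bytes held in memory
        self.hits = 0
        self.misses = 0

    def _load_block(self, path, index):
        key = (path, index)
        if key in self.blocks:
            self.hits += 1
        else:
            self.misses += 1
            start = index * BLOCK_SIZE
            self.blocks[key] = self.backing[path][start:start + BLOCK_SIZE]
        return self.blocks[key]

    def read(self, path, offset, length):
        if length <= 0:
            return b""
        first = offset // BLOCK_SIZE
        last = (offset + length - 1) // BLOCK_SIZE
        data = b"".join(self._load_block(path, i) for i in range(first, last + 1))
        skip = offset - first * BLOCK_SIZE   # bytes to drop from the first block
        return data[skip:skip + length]
```

Reading bytes 5 to 8 of a 16-byte file pulls in only blocks 1 and 2; blocks 0 and 3 never occupy memory unless something reads them.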

Caching cannot work effectively without a sophisticated eviction management mechanism that makes sure memory is used optimally, so we built a new and technically robust eviction mechanism into our platform. Beyond the obvious eviction features, you can, for example, configure certain files to never be evicted, keeping them in memory in all cases for maximum performance.
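As a rough illustration of eviction with a “never evict” list, the sketch below uses a least-recently-used (LRU) policy. LRU is an assumption made for the example; this post does not say which policy GGFS applies, and `PinnedLruCache` and its `pinned` parameter are hypothetical names.

```python
from collections import OrderedDict


class PinnedLruCache:
    """LRU cache in which pinned keys are never chosen as eviction victims."""

    def __init__(self, capacity, pinned=()):
        self.capacity = capacity
        self.pinned = set(pinned)
        self.entries = OrderedDict()    # key -> value, least recently used first

    def get(self, key):
        if key not in self.entries:
            return None
        self.entries.move_to_end(key)   # mark as most recently used
        return self.entries[key]

    def put(self, key, value):
        self.entries[key] = value
        self.entries.move_to_end(key)
        self._evict()

    def _evict(self):
        while len(self.entries) > self.capacity:
            # Oldest entry that is not pinned, if there is one.
            victim = next((k for k in self.entries if k not in self.pinned), None)
            if victim is None:
                break                   # only pinned entries remain; tolerate the overflow
            del self.entries[victim]
```

With a capacity of two and `/hot` pinned, inserting `/a` and then `/b` evicts `/a`, even though `/hot` is the older entry.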

To ensure seamless and continuous performance during MapReduce file scanning, we’ve implemented smart data prefetching: data that is expected to be read in the near future is streamed to the MapReduce task ahead of time. By doing so, GGFS ensures that whenever a MapReduce task finishes reading a file block, the next file block is already available in memory. A significant performance boost was achieved here thanks to our proprietary Inter-Process Communication (IPC) implementation, which allows GGFS to achieve throughput of up to 30 Gbit/s between two processes.
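The read-ahead pattern described above can be sketched with a small thread pool: while the caller processes block N, block N+1 is already being fetched. This is a generic illustration, not the GGFS prefetcher or its IPC layer; `PrefetchingReader`, `fetch_block` and `read_ahead` are hypothetical names.

```python
from concurrent.futures import ThreadPoolExecutor


class PrefetchingReader:
    """Sequential block reader that requests upcoming blocks before they are asked for."""

    def __init__(self, fetch_block, block_count, read_ahead=1):
        self.fetch_block = fetch_block   # callable: block index -> block data (the slow part)
        self.block_count = block_count
        self.read_ahead = read_ahead     # how many blocks to request ahead of the current one
        self.pool = ThreadPoolExecutor(max_workers=2)
        self.inflight = {}               # block index -> Future for a scheduled fetch

    def _schedule(self, index):
        if 0 <= index < self.block_count and index not in self.inflight:
            self.inflight[index] = self.pool.submit(self.fetch_block, index)

    def read(self, index):
        if not 0 <= index < self.block_count:
            raise IndexError(index)
        self._schedule(index)                        # no-op if it was prefetched earlier
        for ahead in range(1, self.read_ahead + 1):
            self._schedule(index + ahead)            # start fetching what comes next
        return self.inflight.pop(index).result()     # wait only for the block requested now

    def close(self):
        self.pool.shutdown(wait=True)
```

When blocks are read in order, each block is fetched exactly once, and every block after the first has had a head start by the time it is requested.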

The table below shows GGFS vs. HDFS (on Flash-based SSDs) benchmark results for raw IO operations:

| Benchmark | GGFS (ms) | HDFS (ms) | Boost |
|---|---|---|---|
| File Scan | 27 | 667 | 2470% |
| File Create | 96 | 961 | 1001% |
| File Random Access | 413 | 2931 | 710% |
| File Delete | 185 | 1234 | 667% |

The above tests were performed on a 10-node cluster of Dell R610 blades with dual 8-core CPUs, running Ubuntu 12.04, a 10GbE network fabric and a stock, unmodified Apache Hadoop 2.x distribution.
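The boost column appears to be the ratio of HDFS time to GGFS time expressed as a percentage, so 2470% means roughly 24.7 times faster rather than a 2470% increase. That reading is inferred from the numbers, not stated by the benchmark; the short check below reproduces the column from the timings in the table.

```python
# Timings copied from the table above: benchmark -> (GGFS ms, HDFS ms)
results_ms = {
    "File Scan": (27, 667),
    "File Create": (96, 961),
    "File Random Access": (413, 2931),
    "File Delete": (185, 1234),
}


def boost_percent(ggfs_ms, hdfs_ms):
    """HDFS time divided by GGFS time, as a whole-number percentage."""
    return round(hdfs_ms / ggfs_ms * 100)


boosts = {name: boost_percent(*pair) for name, pair in results_ms.items()}

for name, boost in boosts.items():
    print(f"{name}: {boost}%")
```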

As you can see from these results the IO performance difference is quite significant. However, HDFS performance as a file system is only a part of Hadoop’s overhead. Another part, no less significant, is the MapReduce overhead. That is what we addressed with In-Memory MapReduce.

In-Memory MapReduce

Once we had our high performance in-memory file system built and tested, we turned our attention to a MapReduce implementation that would take advantage of in-memory technology.

Hadoop’s MapReduce design is one of the weakest points in Hadoop. It’s basically an inefficiently designed system when it comes to distributed processing. GridGain’s In-Memory MapReduce implementation relies heavily on 7 years of experience developing our widely deployed In-Memory HPC product. It is built on a record-based approach rather than the key-value approach of traditional MapReduce, and it enables a much more streamlined parallel execution path over data stored in the in-memory file system.

Furthermore, In-Memory MapReduce eliminates the standard overhead associated with the typical Hadoop job tracker polling, task tracker process creation, deployment and provisioning. All in all – GridGain’s In-Memory MapReduce is a highly optimized HPC-based implementation of the MapReduce concept enabling true low-latency data processing of data stored in GGFS.

The diagram below demonstrates the difference between a standard Hadoop MapReduce execution path and GridGain’s In-Memory MapReduce execution path:

As seen in this diagram our MapReduce implementation supports direct execution path from client to data node. Moreover, all execution in GridGain happens in-process with deployment handled automatically and transparently by GridGain.

In-Memory MapReduce also integrates with MapReduce code written in any Hadoop-supported language, not only native Java or Scala. Developers can easily reuse existing C, C++, Python or any other existing MapReduce code with our In-Memory Accelerator for Hadoop to gain a significant performance boost.
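For readers who have not seen non-Java MapReduce code, here is what a typical Python word count looks like under the Hadoop Streaming convention: the mapper and reducer exchange tab-separated `key<TAB>value` lines, and the reducer receives its input sorted by key. How the accelerator hosts such scripts is not described in this post, so treat this as a sketch of the kind of existing code meant, with `map_lines`, `reduce_lines` and `run` as illustrative names.

```python
import sys
from itertools import groupby


def map_lines(lines):
    """Mapper: emit 'word<TAB>1' for every word in the input lines."""
    for line in lines:
        for word in line.split():
            yield f"{word}\t1"


def reduce_lines(sorted_lines):
    """Reducer: sum the counts per word; the input must already be sorted by key."""
    pairs = (line.rstrip("\n").split("\t", 1) for line in sorted_lines)
    for word, group in groupby(pairs, key=lambda kv: kv[0]):
        yield f"{word}\t{sum(int(count) for _, count in group)}"


def run(role, stdin=sys.stdin, stdout=sys.stdout):
    """Entry point a streaming script would call with role 'map' or 'reduce'."""
    lines = map_lines(stdin) if role == "map" else reduce_lines(stdin)
    for line in lines:
        stdout.write(line + "\n")
```

Locally, the same pipeline can be simulated by sorting the mapper output before handing it to the reducer: `list(reduce_lines(sorted(map_lines(["a b a", "b"]))))`.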

Finally, since we can remove task and job tracker polling, out-of-process execution, and the often unnecessary shuffling and sorting from MapReduce, while letting our products work with a high-performance in-memory file system, we start seeing 10x to 100x performance increases on typical MapReduce payloads. This is not just theory: our tests and our customers can confirm it.

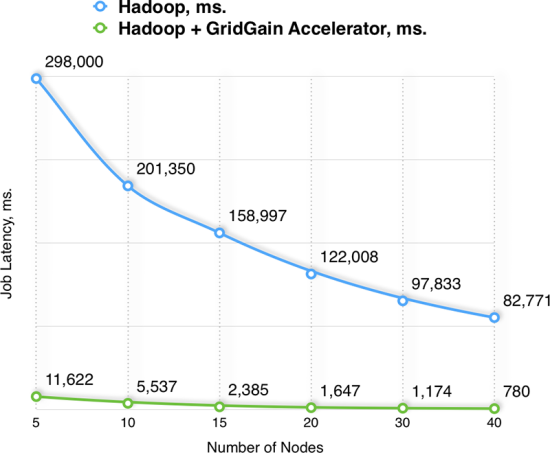

Below are the results of one of our internal tests that uses both the In-Memory File System and In-Memory MapReduce. This test was specifically designed to show the maximum performance of GridGain’s Accelerator vs. a stock Hadoop distribution for I/O-heavy MapReduce jobs:

| Nodes | Hadoop (ms) | Hadoop + GridGain Accelerator (ms) | Boost |
|---|---|---|---|
| 5 | 298,000 | 11,622 | 2,564% |
| 10 | 201,350 | 5,537 | 3,636% |
| 15 | 158,997 | 2,385 | 6,667% |
| 20 | 122,008 | 1,647 | 7,407% |
| 30 | 97,833 | 1,174 | 8,333% |
| 40 | 82,771 | 780 | 10,612% |

Tests were performed on a cluster of Dell R610 blades with dual 8-core CPUs, running Ubuntu 12.04, a 10GbE network fabric, a stock, unmodified Apache Hadoop 2.x distribution and the GridGain 5.2 release.

Management and Monitoring

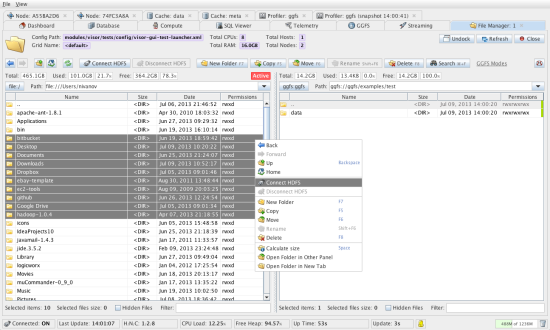

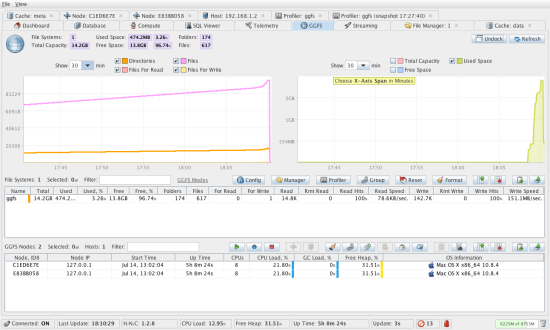

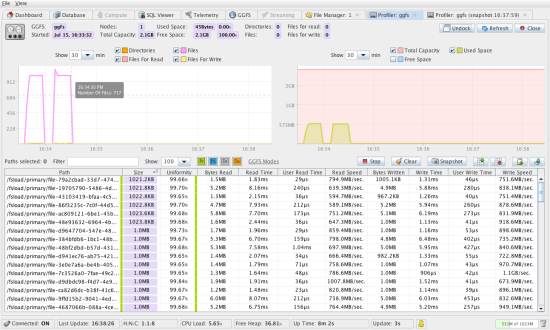

No serious distributed system can be used without comprehensive DevOps support, and the In-Memory Accelerator for Hadoop comes with a comprehensive, unified GUI-based management and monitoring tool called GridGain Visor. Over the last 12 months we’ve added significant support in Visor for the Hadoop Accelerator.

Visor provides deep DevOps capabilities including an operations & telemetry dashboard, database and compute grid management, as well as GGFS management that provides GGFS monitoring and file management between HDFS, local and GGFS file systems.

As part of GridGain Visor, In-Memory Accelerator For Hadoop also comes with a GUI-based file system profiler, which allows you to keep track of all operations your GGFS or HDFS file systems make and identifies potential hot spots.

The GGFS profiler tracks the speed and throughput of reads, writes and various directory operations for all files, and displays these metrics in a convenient view that you can sort by any profiled criterion, e.g. from slowest write to fastest. The profiler also makes suggestions whenever performance could be gained by loading file data into in-memory GGFS.

Conclusion

After almost 2 years of development we have a well-rounded product that can help you accelerate Hadoop MapReduce by up to 100x with minimal integration effort. It’s based on our innovative high-performance in-memory file system and in-memory MapReduce implementation, coupled with one of the best management and monitoring tools.

If you want to be able to say the words “milliseconds” and “Hadoop” in one sentence, you need to take a serious look at GridGain’s In-Memory Hadoop Accelerator.
